Hyperplane separation theorem. If A is a closed polytope, then such a separation exists. In the context of support-vector machines, the optimally separating hyperplane, or maximum-margin hyperplane, is a hyperplane that separates two convex hulls of points and is equidistant from the two. The Hahn–Banach separation theorem generalizes the result to topological vector spaces. A related result is the supporting hyperplane theorem. The authors observed that the survivability requirements increase the problem size dramatically and that in this case, the cutting plane algorithm only slightly improves the LP relaxation lower.

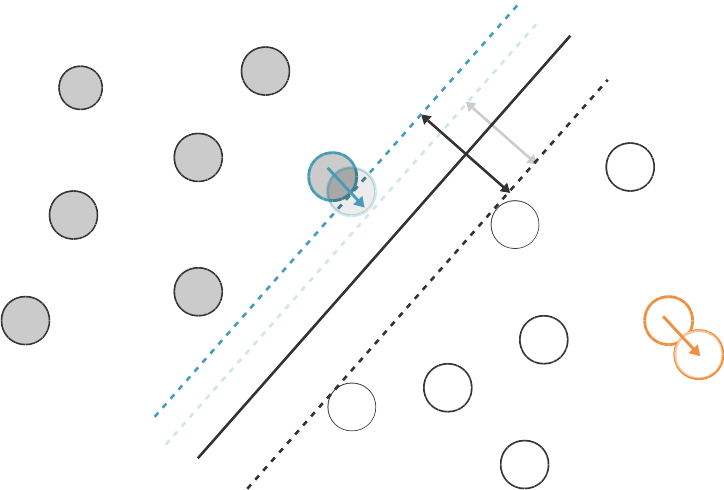

In geometry, the hyperplane separation theorem is a theorem about disjoint convex sets in n-dimensional Euclidean space. The theorem is due to Hermann Minkowski. There are several rather similar versions. In one version, if both sets are closed and at least one of them is compact, then there is a hyperplane in between them, and even two parallel hyperplanes in between them separated by a gap. In another version, if both disjoint convex sets are open, then there is a hyperplane in between them, but not necessarily any gap. An axis that is orthogonal to a separating hyperplane is a separating axis, because the orthogonal projections of the convex bodies onto the axis are disjoint.

Below is the method to calculate a linearly separating hyperplane. A separating hyperplane can be defined by two terms: an intercept term called b and a decision hyperplane normal vector called w, commonly referred to as the weight vector in machine learning. Here b is used to select the hyperplane, that is, to pick one of the hyperplanes perpendicular to the normal vector.

Now, consider the training set $D = \{(\vec{x}_i, y_i)\}$, where $\vec{x}_i$ and $y_i$ represent the n-dimensional data point and its class label, respectively. The class label can only be -1 or +1 (for a 2-class problem). All points $\vec{x}$ on the hyperplane should satisfy the equation $\vec{w}^{T}\vec{x} + b = 0$.

In the example, the optimal separating hyperplane has been found with a margin of 2.00 and 3 support vectors. This hyperplane could be found from these 3 points only.

Generally, the margin can be taken as 2p, where p is the distance between the separating hyperplane and the nearest support vector. Thus, the best hyperplane is the one whose margin is the maximum.
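The roles of w and b described above can be sketched in a few lines of Python. This is only an illustration, not a training procedure: the function names (`decision_value`, `predict`, `separates`), the data set `D`, and the chosen `w` and `b` are all hypothetical values picked for the example.

```python
from typing import List, Sequence, Tuple

Point = Sequence[float]


def decision_value(w: Point, b: float, x: Point) -> float:
    """Return w . x + b, the signed score of x relative to the hyperplane."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def predict(w: Point, b: float, x: Point) -> int:
    """Assign class +1 or -1 depending on the side of the hyperplane."""
    return 1 if decision_value(w, b, x) >= 0 else -1


def separates(w: Point, b: float, data: List[Tuple[Point, int]]) -> bool:
    """True if every labelled point lies strictly on its own side."""
    return all(y * decision_value(w, b, x) > 0 for x, y in data)


# Hypothetical 2-D training set: (data point, class label).
D = [
    ((1.0, 0.0), 1),
    ((1.0, 1.0), 1),
    ((3.0, 2.0), 1),
    ((-1.0, 0.0), -1),
    ((-2.0, 1.0), -1),
]

# Hypothetical hyperplane: the vertical line x1 = 0.
w = (1.0, 0.0)
b = 0.0

print(separates(w, b, D))           # does this hyperplane split the classes?
print(predict(w, b, (0.5, -4.0)))   # label assigned to a new point
```

Points with `decision_value` equal to zero lie exactly on the hyperplane; assigning them to class +1 here is an arbitrary convention of this sketch.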
The distance between the separating hyperplane and the support vectors is known as the margin. The idea is that this hyperplane should be as far as possible from the support vectors.
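As a rough illustration of the margin, the sketch below measures the distance from each point to a given hyperplane w . x + b = 0, treats the nearest points as support vectors, and reports twice the nearest distance. The points, `w`, `b`, and the helper names are hypothetical, and the code only evaluates a hyperplane that is already given; it does not search for the optimal one. Reading twice the nearest distance as the margin assumes the hyperplane is equidistant from the two classes, which holds for this toy data.

```python
import math
from typing import List, Sequence, Tuple

Point = Sequence[float]


def distance_to_hyperplane(w: Point, b: float, x: Point) -> float:
    """Perpendicular distance from x to the hyperplane w . x + b = 0."""
    norm_w = math.sqrt(sum(wi * wi for wi in w))
    return abs(sum(wi * xi for wi, xi in zip(w, x)) + b) / norm_w


def margin_and_support_vectors(
    w: Point, b: float, points: List[Point], tol: float = 1e-9
) -> Tuple[float, List[Point]]:
    """Return (2 * p, nearest points), where p is the smallest distance."""
    distances = [distance_to_hyperplane(w, b, x) for x in points]
    p = min(distances)
    support = [x for x, d in zip(points, distances) if d - p <= tol]
    return 2 * p, support


# Hypothetical data and hyperplane (the line x1 = 0 in the plane).
points = [(1.0, 0.0), (1.0, 1.0), (3.0, 2.0), (-1.0, 0.0), (-2.0, 1.0)]
margin, support = margin_and_support_vectors((1.0, 0.0), 0.0, points)

print(margin)   # 2.0
print(support)  # [(1.0, 0.0), (1.0, 1.0), (-1.0, 0.0)]
```

For this toy data the three nearest points all sit at distance 1 from the line, so the margin is 2 and the remaining two points play no role in fixing the hyperplane.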

Now that we understand the hyperplane, we also need to find the optimal hyperplane.

You cannot visualize the decision surface for a lot of features. This is because the dimensions will be too many and there is no way to visualize an N-dimensional surface. However, you can use 2 features and plot nice decision surfaces.

In collision detection, the hyperplane separation theorem is usually used in the following form, the separating axis theorem: two closed convex objects are disjoint if there exists a line ('separating axis') onto which the two objects' projections are disjoint. Regardless of dimensionality, the separating axis is always a line.
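A minimal sketch of the separating axis test for two convex polygons in the plane follows. It relies on the standard fact that, for convex polygons in 2-D, it is enough to try the normals of the edges as candidate axes. The function names and the example squares are hypothetical, and the sketch assumes each polygon is convex and given as an ordered list of vertices.

```python
from typing import List, Optional, Tuple

Vec = Tuple[float, float]


def project(polygon: List[Vec], axis: Vec) -> Tuple[float, float]:
    """Project every vertex onto the axis; return the (min, max) interval."""
    dots = [x * axis[0] + y * axis[1] for x, y in polygon]
    return min(dots), max(dots)


def edge_normals(polygon: List[Vec]) -> List[Vec]:
    """One (unnormalized) normal per edge of the polygon."""
    normals = []
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        normals.append((-(y2 - y1), x2 - x1))
    return normals


def find_separating_axis(a: List[Vec], b: List[Vec]) -> Optional[Vec]:
    """Return an axis on which the projections are disjoint, else None."""
    for axis in edge_normals(a) + edge_normals(b):
        min_a, max_a = project(a, axis)
        min_b, max_b = project(b, axis)
        if max_a < min_b or max_b < min_a:
            return axis  # projections do not overlap: the shapes are disjoint
    return None  # no candidate axis separates the two polygons


# Hypothetical unit squares: one far to the right, one overlapping.
square = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
far_square = [(2.0, 0.0), (3.0, 0.0), (3.0, 1.0), (2.0, 1.0)]
overlapping = [(0.5, 0.5), (1.5, 0.5), (1.5, 1.5), (0.5, 1.5)]

print(find_separating_axis(square, far_square) is not None)  # True
print(find_separating_axis(square, overlapping) is None)     # True
```

The strict inequalities mean that polygons which merely touch are reported as not separated, which matches the theorem's statement about disjoint closed sets.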
